Lately we have been spending a lot of our time exploring what people are calling “Agentic AI”, where instead of just one AI model doing everything, you have multiple AI agents working together like a team. Honestly, it sounded a bit over-hyped to us at first, but after spending some weekends actually trying to build one, we are now quite convinced this is the real direction things are going.

Here is what we learned and what we built.

What Even Is a Multi-Agent System?

A Multi-Agent System (MAS) is basically a collection of small, autonomous software programs - called agents - that work together to complete a bigger task. Each agent has its own job, its own logic, and its own decision-making. They don't rely on one big central brain to do everything.

Think of it like a company. You have one person for data collection, one for analysis, one for report writing - and they all communicate with each other to get the job done. In the software world, these agents are often powered by LLMs (Large Language Models) or some specialized ML pipelines.

The main advantage here is distributed intelligence. No individual agent is a single point of failure: if one agent goes down, the others keep running and the system can degrade gracefully instead of crashing outright. This is very different from the traditional monolithic approach where everything is tightly coupled.

Why We Chose gRPC and n8n

When we started thinking about how to connect multiple agents, two problems immediately hit us:

- How do agents talk to each other efficiently? – Plain REST/HTTP APIs with JSON payloads add serialization and connection overhead, and that overhead grows quickly when agents pass large data or need streaming.

- How do we manage the whole workflow? – Hardcoding “Agent A calls Agent B calls Agent C” in Python is messy and not maintainable.

After some research, we settled on gRPC for communication and n8n for orchestration.

gRPC is built on top of HTTP/2 and uses protobuf (Protocol Buffers) for binary serialization. Messages are typically smaller and cheaper to parse than the equivalent JSON, which is where most of the speed advantage over JSON-based REST comes from. It also natively supports bidirectional streaming, which is a good fit for agent-to-agent (A2A) communication. And since we work mostly in Python, the grpcio library made it straightforward to set up.
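To make the binary serialization point concrete, here is a small sketch that hand-encodes a message with a single string field (field number 1) the way the protobuf wire format lays it out: a tag, a length, then the bytes. The helper names and the JSON key `raw` are only for illustration; in real code the generated protobuf classes do this for you.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative int as a protobuf base-128 varint."""
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)  # high bit set: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def encode_string_field(field_number: int, text: str) -> bytes:
    """Encode one length-delimited (wire type 2) string field."""
    payload = text.encode("utf-8")
    tag = (field_number << 3) | 2
    return encode_varint(tag) + encode_varint(len(payload)) + payload


as_protobuf = encode_string_field(1, "hello")
as_json = json.dumps({"raw": "hello"}).encode("utf-8")

# Byte counts for the same logical message in both encodings
print(len(as_protobuf), len(as_json))
```

The saving comes from replacing field names with small integer tags. It says nothing about end-to-end latency, which also depends on HTTP/2, the network, and how large your payloads really are.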

n8n is an open-source workflow automation tool - kind of like Zapier but self-hosted and much more powerful for custom use cases. You can build visual workflows, add conditional logic, retry failed steps, and even embed LLM calls directly. No need to write a full backend just for orchestration.

Together these two tools give you a very solid foundation for building production-ready multi-agent systems.

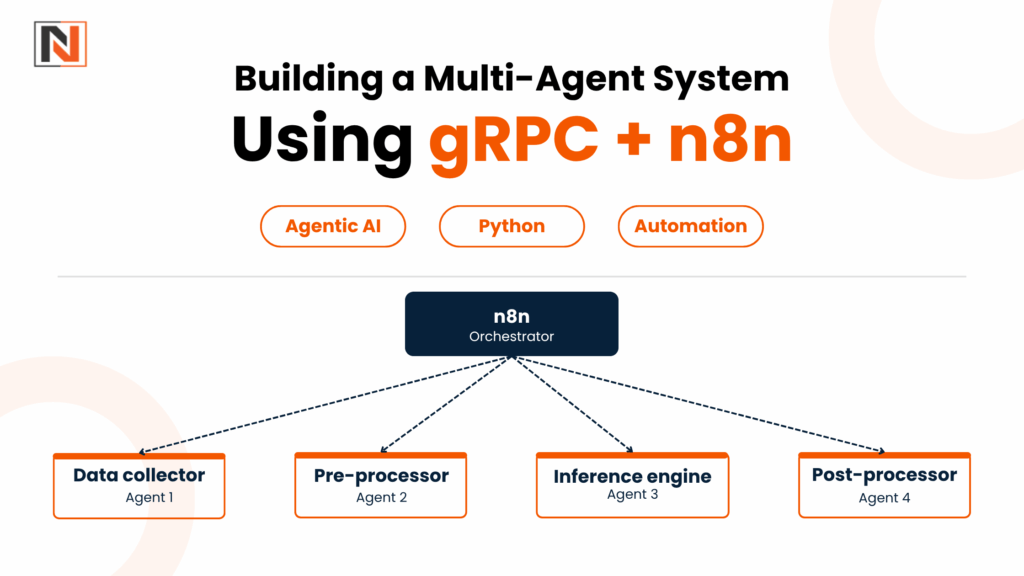

The Core Roles of Agents

In our setup, we defined four types of agents (this is a pretty standard pattern):

- Data Collector – Fetches raw data from APIs, databases, or sensors

- Pre-processor – Cleans and normalizes the incoming data

- Inference Engine – Calls an LLM or ML model to generate insights

- Post-processor – Formats the output, writes to storage, or triggers the next action

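By defining these roles clearly in protobuf message formats, the contract between agents becomes very explicit. This is important: if you change one agent’s output format, the change has to go through the shared .proto file, so calling code that still uses the old fields fails loudly against the regenerated stubs instead of silently passing a differently shaped payload.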
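As a rough illustration of the four roles and the idea of an explicit contract, here is a plain-Python sketch with no gRPC involved. The dataclasses stand in for protobuf messages; the field names `raw`, `cleaned`, and `output` match the ones we use in the .proto file further down, while the function names and the sample reading are made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RawRecord:
    raw: str


@dataclass(frozen=True)
class CleanRecord:
    cleaned: str


@dataclass(frozen=True)
class Insight:
    output: str


def collect() -> RawRecord:
    # Data Collector: stand-in for an API, database, or sensor read
    return RawRecord(raw="  Sensor A: TEMP=21.5C  ")


def preprocess(record: RawRecord) -> CleanRecord:
    # Pre-processor: trim and normalize
    return CleanRecord(cleaned=record.raw.strip().lower())


def infer(record: CleanRecord) -> Insight:
    # Inference Engine: placeholder for an LLM or ML model call
    return Insight(output=f"Processed: {record.cleaned}")


def postprocess(insight: Insight) -> dict:
    # Post-processor: shape the result for storage or the next step
    return {"inference": insight.output}


result = postprocess(infer(preprocess(collect())))
print(result)
```

Each function only knows the type it receives and the type it returns, which is the same separation the protobuf messages enforce between real agents.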

Communication Patterns

There are three main ways agents can talk to each other:

- Request-Response – One agent calls another and waits for the result. Simple and common.

- Streaming – One agent continuously sends data to another. Useful for real-time stuff.

- Publish-Subscribe – An agent broadcasts an event and any interested agent can listen. This gives loose coupling.

n8n handles the orchestration layer – meaning it decides who calls whom, in what order, with what retry logic. You don’t need to hardcode this in your agent code.
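Of the three patterns, publish-subscribe is the one gRPC does not give you out of the box; it usually sits on a message broker or a server-streaming RPC. To show just the shape of the idea, here is a tiny in-process sketch built on asyncio queues. `EventBus`, the topic name, and the event payload are all hypothetical, and a real deployment would put a broker where the dictionary is.

```python
import asyncio
from collections import defaultdict


class EventBus:
    """Tiny in-process publish-subscribe hub (illustrative only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str) -> asyncio.Queue:
        # Each subscriber gets its own queue, so no agent steals another's events
        queue = asyncio.Queue()
        self._subscribers[topic].append(queue)
        return queue

    async def publish(self, topic: str, event: str) -> int:
        # Deliver the event to every subscriber and report how many received it
        queues = self._subscribers[topic]
        for queue in queues:
            await queue.put(event)
        return len(queues)


async def demo():
    bus = EventBus()
    inference_inbox = bus.subscribe("data.cleaned")
    audit_inbox = bus.subscribe("data.cleaned")
    delivered = await bus.publish("data.cleaned", "record-42")
    return delivered, await inference_inbox.get(), await audit_inbox.get()


print(asyncio.run(demo()))
```

The publisher never learns who is listening, which is where the loose coupling comes from.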

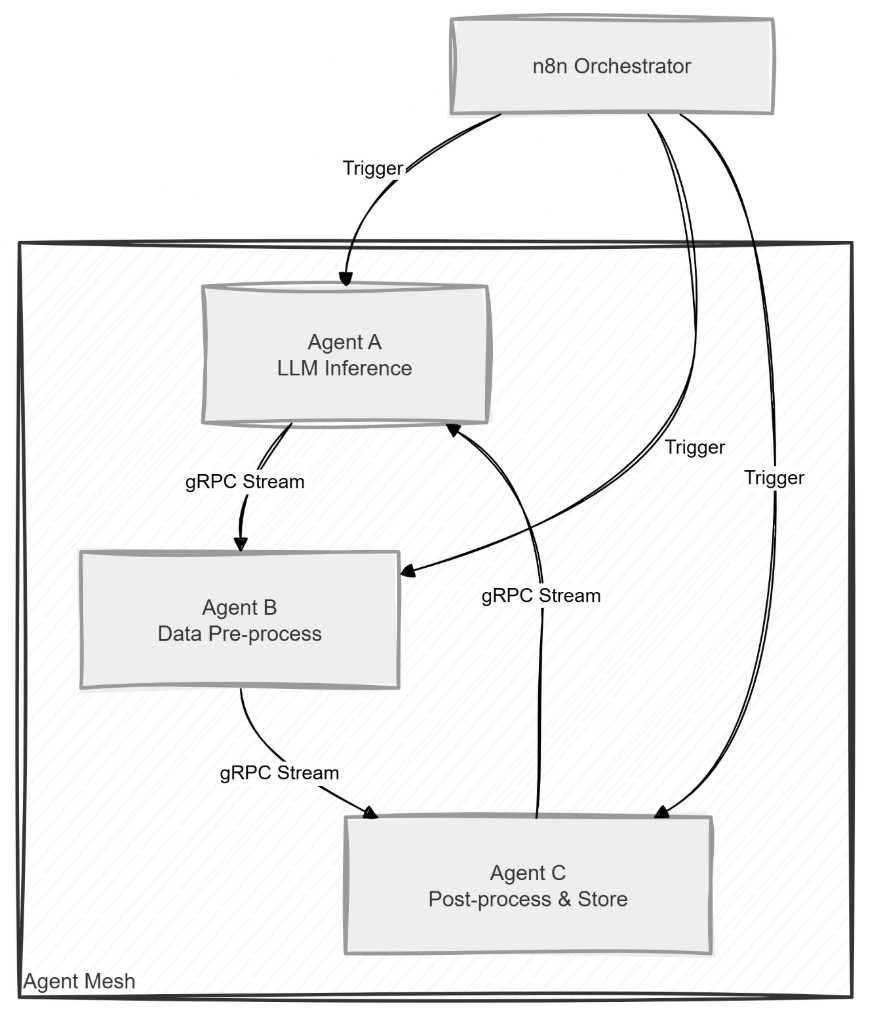

What Is an Agent Mesh?

One concept we found really interesting is the agent mesh. Instead of a star topology where one central agent controls everything, in a mesh every agent can talk directly to multiple other agents.

Benefits are:

- Resilience – One agent failing doesn’t break the whole system

- Load Balancing – Work can shift dynamically to less busy agents

- Scalability – Adding a new agent just means adding a new node to the mesh

When you combine this with gRPC’s streaming, the mesh becomes a high-throughput backbone that can handle real AI workloads.
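The resilience and load balancing benefits boil down to one routing decision: skip peers that are down and prefer the least busy one. A minimal sketch of that decision follows, assuming each agent somehow reports its health and in-flight request count. The `AgentNode` class and the agent names are invented for the example, and how you collect those numbers is left open.

```python
from dataclasses import dataclass


@dataclass
class AgentNode:
    name: str
    in_flight: int = 0   # requests currently being handled
    healthy: bool = True


def pick_agent(peers: list[AgentNode]) -> AgentNode:
    """Choose the healthy peer with the fewest in-flight requests."""
    candidates = [peer for peer in peers if peer.healthy]
    if not candidates:
        raise RuntimeError("no healthy agents available")
    return min(candidates, key=lambda peer: peer.in_flight)


peers = [
    AgentNode("inference-1", in_flight=4),
    AgentNode("inference-2", in_flight=1),
    AgentNode("inference-3", in_flight=0, healthy=False),
]

# inference-3 is idle but down, so the least busy healthy peer wins
print(pick_agent(peers).name)
```

Adding a node to the mesh is then just appending to `peers`; nothing else in the routing logic changes.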

Setting Things Up - The Code

The Proto File (The Contract Between Agents)

First thing is to define the .proto file. This is the shared contract - both the server and client use the same file:

    syntax = "proto3";

    package agent;

    message ProcessRequest {
      string raw = 1;
    }

    message ProcessResponse {
      string cleaned = 1;
    }

    message InferenceRequest {
      string data = 1;
    }

    message InferenceResponse {
      string output = 1;
    }

    service AgentService {
      rpc ProcessData (ProcessRequest) returns (ProcessResponse);
      rpc RunInference (InferenceRequest) returns (InferenceResponse);
    }

Simple enough. Two RPCs – one for preprocessing, one for inference.
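The Python code below imports `agent_pb2` and `agent_pb2_grpc`, which are generated from this file. One way to generate them, assuming the grpcio-tools package is installed and `agent.proto` sits in the current directory, is the small helper sketched here. It is equivalent to running `python -m grpc_tools.protoc -I. --python_out=. --grpc_python_out=. agent.proto`; the helper function names are our own.

```python
def protoc_args(proto_file: str, proto_dir: str = ".", out_dir: str = ".") -> list[str]:
    """Build the argument list for the protoc wrapper shipped with grpcio-tools."""
    return [
        "grpc_tools.protoc",             # argv[0], a placeholder program name
        f"-I{proto_dir}",                # where to look for .proto files
        f"--python_out={out_dir}",       # writes agent_pb2.py
        f"--grpc_python_out={out_dir}",  # writes agent_pb2_grpc.py
        proto_file,
    ]


def generate_stubs(proto_file: str = "agent.proto") -> int:
    """Run protoc and return its exit code (0 on success)."""
    # Imported here so this module still loads when grpcio-tools is not installed
    from grpc_tools import protoc

    return protoc.main(protoc_args(proto_file))
```

Calling `generate_stubs()` once after every change to the .proto file keeps the server and client code in sync with the contract.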

Basic Python gRPC Agent Server

    # python_grpc_service.py
    import grpc
    from concurrent import futures

    import agent_pb2
    import agent_pb2_grpc


    class AgentService(agent_pb2_grpc.AgentServiceServicer):
        def ProcessData(self, request, context):
            cleaned = request.raw.strip().lower()
            return agent_pb2.ProcessResponse(cleaned=cleaned)

        def RunInference(self, request, context):
            output = f"Processed: {request.data}"
            return agent_pb2.InferenceResponse(output=output)


    def serve():
        server = grpc.server(futures.ThreadPoolExecutor(max_workers=10))
        agent_pb2_grpc.add_AgentServiceServicer_to_server(AgentService(), server)
        server.add_insecure_port('[::]:50051')
        server.start()
        print("gRPC server running on port 50051")
        server.wait_for_termination()


    if __name__ == '__main__':
        serve()

This is a synchronous server – fine for simple cases. But for production with many concurrent agent calls, you’d want the async version.

Async gRPC Server (Better for Production)

    # async_grpc_server.py
    import asyncio

    import grpc

    import agent_pb2
    import agent_pb2_grpc


    class AsyncAgentService(agent_pb2_grpc.AgentServiceServicer):
        async def ProcessData(self, request, context):
            cleaned = request.raw.strip().lower()
            return agent_pb2.ProcessResponse(cleaned=cleaned)

        async def RunInference(self, request, context):
            await asyncio.sleep(0.1)  # simulating async inference call
            output = f"Async result for {request.data}"
            return agent_pb2.InferenceResponse(output=output)


    async def serve():
        server = grpc.aio.server()
        agent_pb2_grpc.add_AgentServiceServicer_to_server(AsyncAgentService(), server)
        listen_addr = '[::]:50052'
        server.add_insecure_port(listen_addr)
        await server.start()
        print(f"Async gRPC server listening on {listen_addr}")
        await server.wait_for_termination()


    if __name__ == '__main__':
        asyncio.run(serve())

The grpc.aio module is the way to go for async. It integrates cleanly with Python’s asyncio event loop, so your agents won’t block each other while waiting for responses.

Orchestrating With n8n

For n8n, we created a custom Function node that calls two agents in sequence - first the preprocessor, then the inference engine:

    // n8n Function Node: Orchestrate two gRPC AI agents
    // Note: on a self-hosted instance, external modules like the two below must be
    // allowed through the NODE_FUNCTION_ALLOW_EXTERNAL environment variable.
    const grpc = require('@grpc/grpc-js');
    const protoLoader = require('@grpc/proto-loader');

    // The path is resolved relative to the n8n process; adjust it to where agent.proto lives
    const packageDef = protoLoader.loadSync('agent.proto', {
      keepCase: true, longs: String, enums: String, defaults: true, oneofs: true,
    });
    const agentProto = grpc.loadPackageDefinition(packageDef).agent;

    const preprocessClient = new agentProto.AgentService('localhost:50051', grpc.credentials.createInsecure());
    const inferenceClient = new agentProto.AgentService('localhost:50052', grpc.credentials.createInsecure());

    // Wrap the callback-style gRPC call in a Promise so it can be awaited
    function callAgent(client, method, request) {
      return new Promise((resolve, reject) => {
        client[method](request, (err, response) => {
          if (err) reject(err);
          else resolve(response);
        });
      });
    }

    async function orchestrate() {
      try {
        const preprocessed = await callAgent(preprocessClient, 'ProcessData', { raw: $json.input });
        const result = await callAgent(inferenceClient, 'RunInference', { data: preprocessed.cleaned });
        return [{ json: { inference: result.output } }];
      } catch (error) {
        throw new Error(`Agent orchestration failed: ${error.message}`);
      }
    }

    return orchestrate();

This keeps the agent code clean – the agents just do their own job, and n8n decides the flow. If you want to add a third agent later, you just add one more step in n8n’s canvas. No code changes needed in existing agents.

Bidirectional Streaming - The Really Cool Part

This is where things got interesting for me. Bidirectional streaming means two agents can keep a conversation going - one sends a message, the other replies, and this keeps happening in real-time without opening new connections each time.

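    # bidirectional_stream.py
    # Assumes agent.proto is extended with a streaming RPC that the contract above
    # does not include yet:
    #   message ChatRequest  { string message = 1; }
    #   message ChatResponse { string message = 1; }
    #   rpc Chat (stream ChatRequest) returns (stream ChatResponse);
    import asyncio

    import grpc

    import agent_pb2
    import agent_pb2_grpc


    class StreamAgent(agent_pb2_grpc.AgentServiceServicer):
        async def Chat(self, request_iterator, context):
            # Reply to each incoming message as soon as it arrives
            async for request in request_iterator:
                response = agent_pb2.ChatResponse(message=f"Agent reply: {request.message}")
                yield response


    async def run_client():
        async with grpc.aio.insecure_channel('localhost:50053') as channel:
            stub = agent_pb2_grpc.AgentServiceStub(channel)

            async def request_gen():
                for i in range(5):
                    yield agent_pb2.ChatRequest(message=f"Message {i}")
                    await asyncio.sleep(0.2)

            async for response in stub.Chat(request_gen()):
                print("Received:", response.message)


    async def main():
        # Start the streaming agent in-process so the client has something to talk to
        server = grpc.aio.server()
        agent_pb2_grpc.add_AgentServiceServicer_to_server(StreamAgent(), server)
        server.add_insecure_port('[::]:50053')
        await server.start()
        await run_client()
        await server.stop(None)


    if __name__ == '__main__':
        asyncio.run(main())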

This is useful for things like iterative refinement – where one agent keeps improving an output based on feedback from another agent. Very powerful for LLM-based pipelines.

Handling Failures (Don't Skip This)

One thing we learnt the hard way: you need to plan for agent failures from day one. A few patterns that work well:

- Circuit Breaker – After N consecutive failures from an agent, stop calling it for a while instead of hammering it

- Fallback Agent – Have a simpler, rule-based agent ready to take over if the main LLM agent is down

- Retry with Exponential Backoff – Retry failed calls with increasing delays. n8n’s per-node Retry On Fail setting covers simple retries with a fixed wait; a growing delay has to be built into the workflow or the calling code

The n8n “Error Trigger” node is particularly useful: it starts a separate error workflow whenever an execution fails, which you can use to send alerts or kick off a backup path.
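The three patterns above fit in a few lines of plain Python. This is a minimal sketch, not what n8n does internally: `CircuitBreaker`, `backoff_delays`, the thresholds, and the two toy agents are all our own illustrative names and values.

```python
import time


class CircuitBreaker:
    """Skip an agent after `max_failures` consecutive errors, then retry after a cool-down."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, primary, fallback, *args):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                return fallback(*args)   # circuit open: do not touch the primary
            self._opened_at = None       # cool-down over: allow one trial call
        try:
            result = primary(*args)
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            return fallback(*args)
        self._failures = 0
        return result


def backoff_delays(retries, base=0.5, cap=8.0):
    """Exponential backoff schedule: base, 2*base, 4*base, ... capped at `cap` seconds."""
    return [min(cap, base * 2 ** attempt) for attempt in range(retries)]


def flaky_llm_agent(text):
    raise ConnectionError("LLM agent unavailable")


def rule_based_agent(text):
    return f"fallback: {text.strip().lower()}"


breaker = CircuitBreaker(max_failures=2, reset_after=30.0)
print(breaker.call(flaky_llm_agent, rule_based_agent, "  Hello "))
print(backoff_delays(5))
```

In practice you would also add some random jitter to the delays so that many callers do not retry at the same instant.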

gRPC vs REST - Is It Worth the Complexity?

Honestly this is a fair question. Here's what I found:

| Protocol | Performance | Cost | Best For |
|---|---|---|---|
| gRPC | Very high - binary, low latency, streaming | Medium (self-hosted infra) | High-throughput AI pipelines, streaming |
| REST | Moderate - JSON over HTTP/1.1 | Low (many managed options) | Simple request-response, public APIs |
| WebSockets | Good for bidirectional | Medium | Real-time but no schema enforcement |

For AI agent workloads – especially where you are passing large data or doing streaming inference – we found gRPC to be the better fit. REST is fine for simple request-response calls, but its limitations show up quickly once payloads grow or you need streaming.

Scaling and Monitoring

Once the basic system is working, the next challenge is scaling it. A few things that helped me:

- Containerize each agent separately – Deploy on Kubernetes, use Horizontal Pod Autoscaling based on CPU or custom gRPC metrics

- Keep agents stateless – Store any shared state in an external DB (Postgres, Redis) so agents can be freely replicated

- Use a service mesh like Istio if you want intelligent traffic routing across the agent mesh

For monitoring, OpenTelemetry is the way to go. Instrument your gRPC calls and n8n workflow steps to export traces to Jaeger or Grafana Tempo, and metrics to Prometheus. This gives you visibility into latency per A2A call, failure rates, and where exactly your bottlenecks are.
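To show what "latency per call and failure rates" means at the code level, here is a standard-library stand-in. It is deliberately not the OpenTelemetry API, just a decorator that records the same three numbers you would later export as metrics. The names `observed` and `call_stats` and the label string are made up for the sketch.

```python
import time
from collections import defaultdict
from functools import wraps

# Per-call-name counters; a real setup would export these to a metrics backend
call_stats = defaultdict(lambda: {"calls": 0, "failures": 0, "total_seconds": 0.0})


def observed(name):
    """Record call count, failure count, and cumulative latency for one agent call."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            stats = call_stats[name]
            start = time.perf_counter()
            try:
                return func(*args, **kwargs)
            except Exception:
                stats["failures"] += 1
                raise
            finally:
                # Runs on success and on failure, so every call is counted once
                stats["calls"] += 1
                stats["total_seconds"] += time.perf_counter() - start
        return wrapper
    return decorator


@observed("preprocessor.ProcessData")
def process_data(raw):
    return raw.strip().lower()


process_data("  Hello ")
print(dict(call_stats))
```

Dividing `total_seconds` by `calls` gives the average latency per agent call, which is usually the first place a bottleneck shows up.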

Final Thoughts

We started this exploration a bit skeptically but honestly, the combination of gRPC + n8n + Python is a very solid stack for building multi-agent AI systems. The key things we took away:

- gRPC handles the low-level agent-to-agent communication extremely well – in our experience a better fit than REST for streaming-heavy AI workloads

- n8n makes orchestration visual and maintainable – you can change workflow logic without touching agent code

- The agent mesh topology is the right way to build for resilience and scale

- Plan for failure handling from the beginning – it will save you a lot of pain later

If you’re a developer who’s been curious about agentic AI but didn’t know where to start, we suggest just picking a small use case, writing two Python gRPC services, and wiring them together in n8n.